Introduction

Modern platform teams need to support fast-moving engineering orgs while controlling cloud costs, ensuring workload security, and maintaining operational sanity. But Kubernetes doesn’t make multi-tenancy easy.

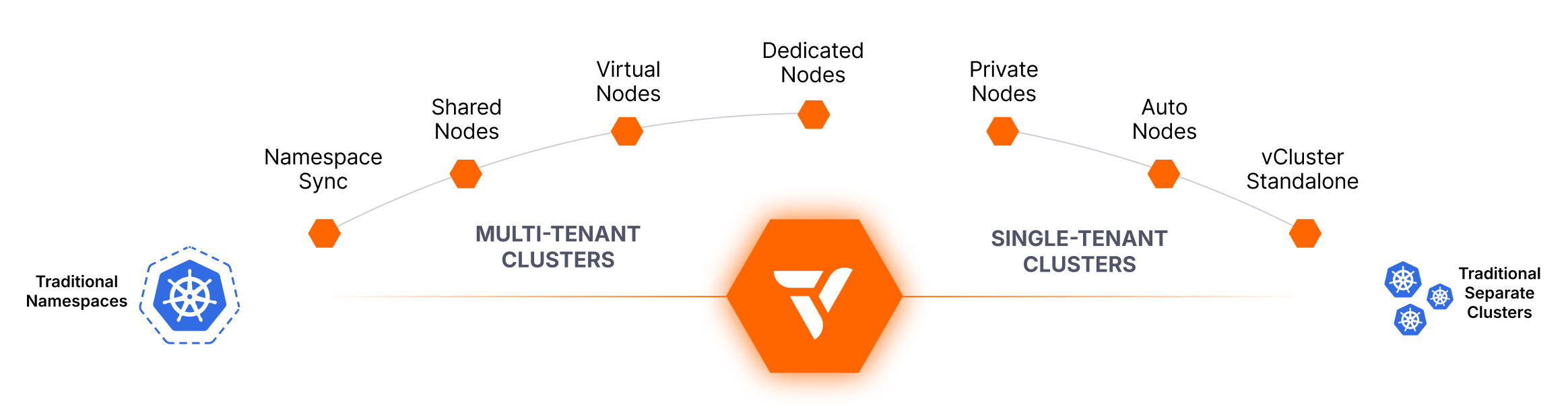

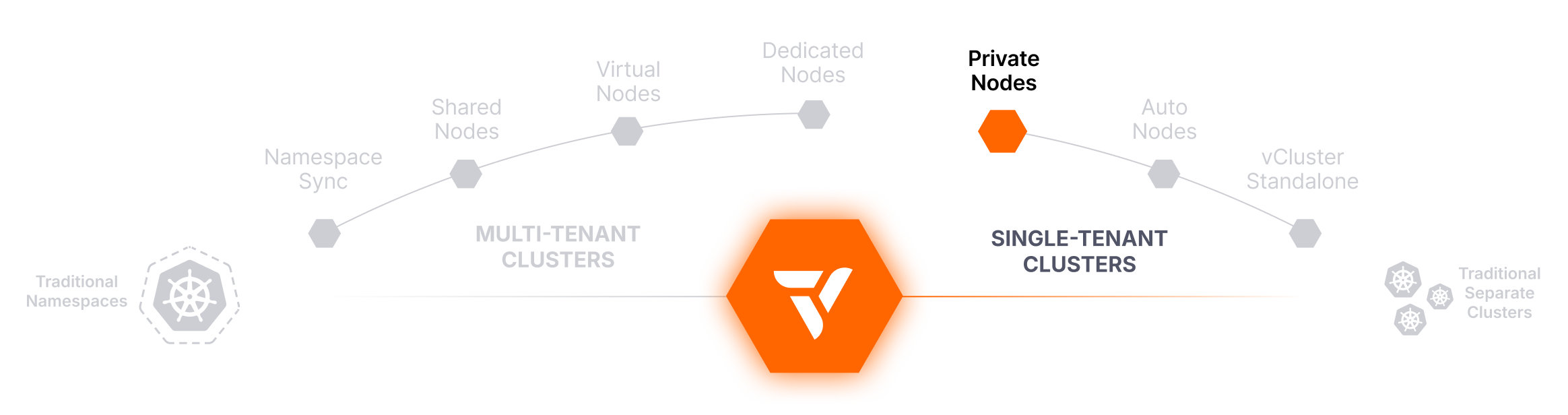

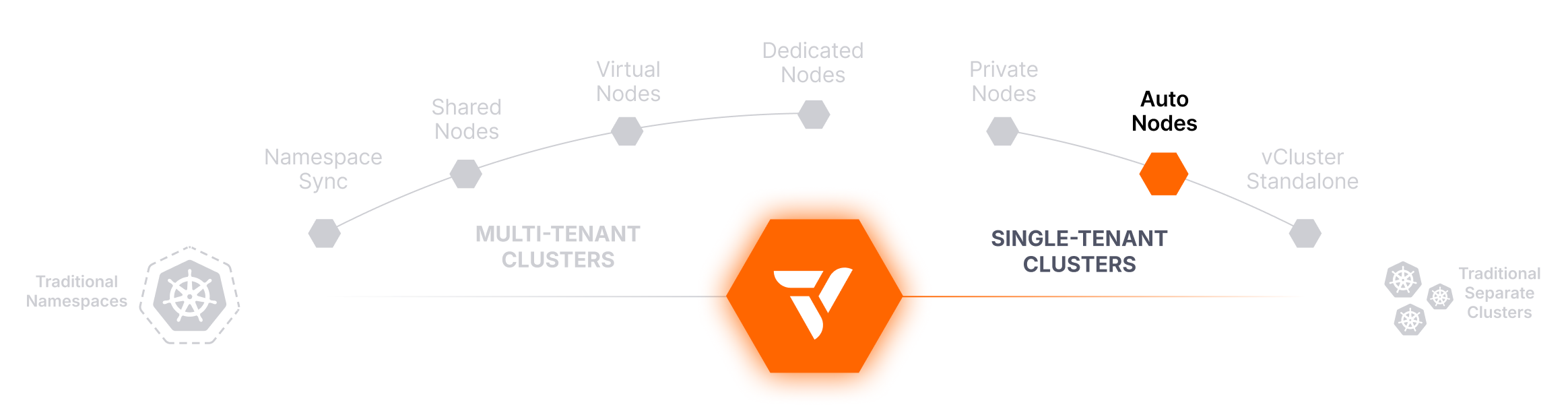

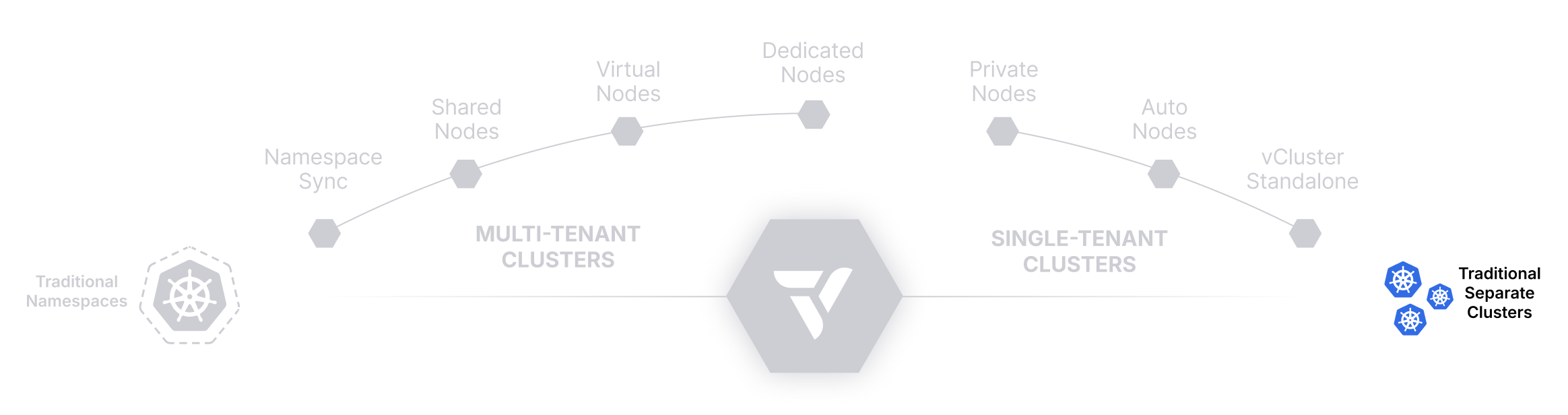

This guide breaks down the core tenancy models supported by vCluster—from lightweight namespace-based approaches to fully isolated per-tenant environments—and introduces platform-level capabilities like Auto Nodes that help you scale infrastructure intelligently.

Whether you’re running dev/test clusters, multi-tenant SaaS workloads, or GPU-intensive training pipelines, this guide can help you choose the right isolation strategy for every team, environment, or use case. Let’s get started!

Traditional Namespaces

Use Native Namespaces for Multi-Tenancy — Fast but Limited in Scope

Lightweight and simple, but lacks strong isolation. Ideal for small teams or environments where security boundaries aren’t a concern.

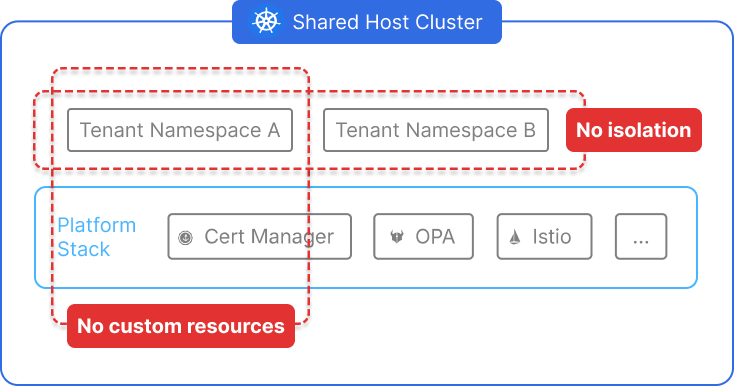

Kubernetes namespaces are the most basic and widely used model for multi-tenancy. Each team or tenant is assigned a unique namespace within a shared cluster. Platform teams can apply RBAC rules, network policies, and resource quotas to help segment access and limit usage within each namespace.

While this approach is quick to set up and uses only core Kubernetes primitives, it lacks any form of control plane or API isolation. All tenants share the same scheduler, CRDs, node pool, and cluster-wide configurations. As a result, namespaces are prone to issues like CRD conflicts, noisy neighbors, and accidental cross-tenant impacts—making them unsuitable for production-grade multi-tenancy at scale.

How It Works

Namespaces logically divide a Kubernetes cluster into smaller, scoped environments using native Kubernetes objects. Each tenant receives a namespace, and optional policies are layered on top:

- RBAC: Controls which users or service accounts can access resources in a namespace.

- ResourceQuotas: Prevent a single tenant from consuming all compute resources.

- Network Policies: Can restrict traffic between namespaces.

All tenants continue to use the same underlying control plane, scheduler, node pool, and extensions. Cluster-wide resources like CRDs and webhooks are still global, and a misconfigured tenant can easily impact others.
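As a rough illustration of the layering described above, the sketch below builds a namespace plus one RBAC, quota, and network policy object for a single tenant. The tenant name, group name, and quota values are placeholder assumptions; the object kinds and fields are standard Kubernetes API objects, and `edit` is one of the built-in user-facing ClusterRoles.

```python
import json


def tenant_manifests(tenant: str, group: str) -> list:
    """Build a namespace plus the three policy layers for one tenant."""
    rbac_api = "rbac.authorization.k8s.io"
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": tenant},
    }
    # RBAC: grant the tenant's group the built-in "edit" ClusterRole,
    # but only inside this namespace (RoleBinding, not ClusterRoleBinding).
    role_binding = {
        "apiVersion": f"{rbac_api}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{tenant}-edit", "namespace": tenant},
        "subjects": [{"kind": "Group", "name": group, "apiGroup": rbac_api}],
        "roleRef": {"kind": "ClusterRole", "name": "edit", "apiGroup": rbac_api},
    }
    # ResourceQuota: cap aggregate requests (values are placeholders).
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{tenant}-quota", "namespace": tenant},
        "spec": {"hard": {"requests.cpu": "4", "requests.memory": "8Gi", "pods": "20"}},
    }
    # NetworkPolicy: select every pod in the namespace and allow ingress
    # only from pods in the same namespace.
    network_policy = {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "same-namespace-only", "namespace": tenant},
        "spec": {
            "podSelector": {},
            "policyTypes": ["Ingress"],
            "ingress": [{"from": [{"podSelector": {}}]}],
        },
    }
    return [namespace, role_binding, quota, network_policy]


if __name__ == "__main__":
    bundle = {"apiVersion": "v1", "kind": "List",
              "items": tenant_manifests("team-a", "team-a-devs")}
    print(json.dumps(bundle, indent=2))
```

Note that nothing in this bundle touches CRDs, webhooks, or the scheduler. Those remain cluster-wide, which is exactly the gap the following sections address.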

Why It’s Valuable

- Zero Overhead: No extra tooling or provisioning required; works in any Kubernetes cluster.

- Quick Setup: Namespaces can be created instantly and managed via GitOps or CLI.

- Low Complexity: Platform teams don’t have to manage clusters or additional isolation layers.

- Familiar to Devs: Developers often already understand namespaces from past projects.

- Resource Visibility: Cluster admins can centrally observe and manage all workloads.

Challenges with This Approach

- No Control Plane Isolation: One misconfigured tenant can affect the entire cluster.

- CRD and API Conflicts: CRDs, admission controllers, and webhooks are cluster-wide, so tenants can’t install their own or run conflicting versions side by side.

- Security Gaps: Enforcing true isolation via policies is difficult and error-prone.

- Limited Customization: Tenants can’t use their own controllers, operators, or tooling.

- Noisy Neighbors: Resource spikes from one tenant can degrade performance for others.

These limitations make traditional namespaces a poor fit for environments with strong isolation, customization, or scalability requirements.

Best-Fit Use Cases

- Internal or small teams that trust one another and don’t need strictly enforced boundaries

- CI/CD pipelines or ephemeral dev workloads

- Proof-of-concept environments with no regulatory or security concerns

- Organizations just getting started with Kubernetes multi-tenancy

Isolation Characteristics

Compatibility

- ✅ Works in every Kubernetes cluster out of the box

- 🔶 Can be layered with policies for soft isolation

- ❌ No CRD or API isolation — conflicts can occur

- ❌ No tenant-level access to install controllers or custom webhooks

- ❌ Shared failure domains and limited customization

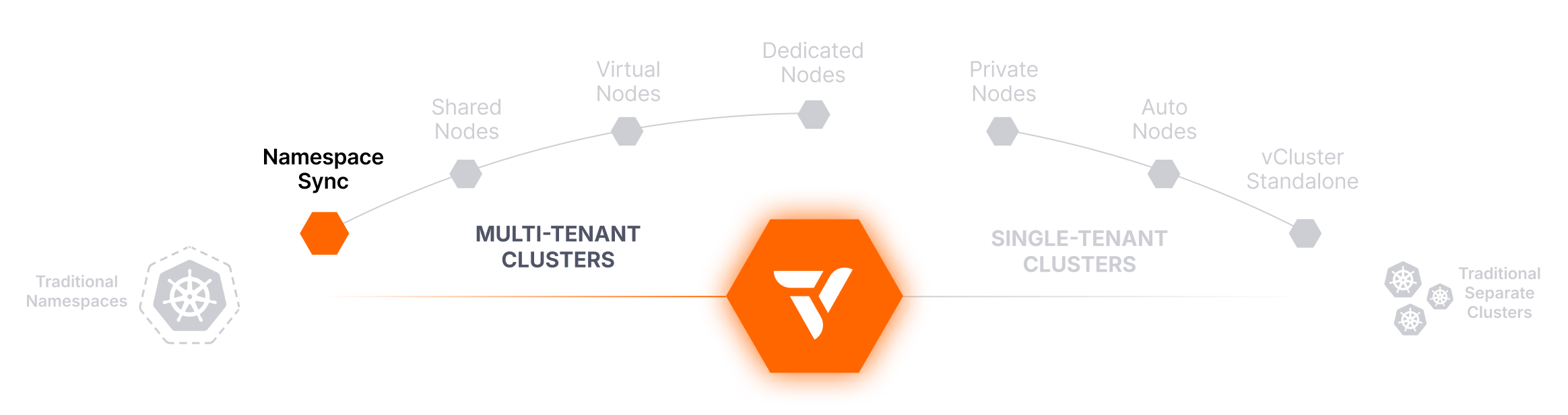

Namespace Syncing

Sync Tenant Namespaces to a Virtual Cluster — Lightweight Isolation, No Infrastructure Changes

Bring existing namespaces under vCluster management without disrupting workloads or provisioning new clusters.

Namespace Syncing allows you to connect existing Kubernetes namespaces from the host cluster to a virtual cluster, making it one of the fastest and most flexible ways to adopt vCluster without changing how workloads are deployed. This approach lets you treat an existing namespace as a fully isolated virtual cluster from an access, API, and control perspective—without relocating workloads or provisioning additional infrastructure.

With Namespace Syncing, platform teams can gradually migrate to vCluster without impacting running workloads. Tenants gain virtual cluster benefits like isolated API access, scoped RBAC, and custom CRDs, while underlying compute remains shared and existing namespace-level policies stay in place.

How It Works

When a vCluster is created with namespace syncing enabled, it establishes a logical connection between a namespace in the host cluster and the virtual cluster’s control plane. All Kubernetes resources created within the virtual cluster are automatically synced into the specified namespace on the host cluster.

The synced namespace continues to use the host’s node pool, network infrastructure, and storage. However, tenants interact with the virtual cluster as if it were a dedicated environment—with their own CRDs, RBAC policies, service accounts, and access controls—enforced by the vCluster layer.
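To make the syncing idea more concrete, here is a minimal sketch of how objects from several virtual namespaces could be mapped into one host namespace without name collisions. The function names and the `-x-` naming scheme are assumptions chosen for illustration; this is a simplified model, not vCluster’s actual syncer.

```python
import hashlib

MAX_NAME_LEN = 63  # conservative bound on Kubernetes object name length


def host_name(name: str, namespace: str, vcluster: str) -> str:
    """Map a virtual-cluster object to a unique name in the host namespace."""
    candidate = f"{name}-x-{namespace}-x-{vcluster}"
    if len(candidate) <= MAX_NAME_LEN:
        return candidate
    # Too long: keep a readable prefix and append a short hash for uniqueness.
    digest = hashlib.sha256(candidate.encode()).hexdigest()[:10]
    return f"{candidate[:MAX_NAME_LEN - 11]}-{digest}"


def to_host(obj: dict, vcluster: str, host_namespace: str) -> dict:
    """Return a copy of a virtual object rewritten for the host namespace."""
    meta = obj["metadata"]
    virtual_ns = meta.get("namespace", "default")
    host = dict(obj)
    host["metadata"] = {
        **meta,
        "name": host_name(meta["name"], virtual_ns, vcluster),
        "namespace": host_namespace,
    }
    return host


if __name__ == "__main__":
    pod = {"kind": "Pod", "metadata": {"name": "web", "namespace": "dev"}}
    print(to_host(pod, "tenant-a", "tenant-a-ns")["metadata"])
```

In this model, a tenant can create `web` in both `dev` and `prod` inside the virtual cluster, and the two pods land in the same host namespace under different names.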

Why It’s Valuable

- Fast Adoption Path: Migrate workloads into vCluster without moving or re-deploying anything.

- Low Infrastructure Overhead: Uses the same host cluster and namespace structure already in place.

- Control Plane Isolation: Tenants get their own API server and control mechanisms.

- Customizability: Tenants can define their own CRDs and controllers without impacting others.

- Ideal for Gradual Migration: Enables step-by-step adoption of vCluster for existing namespace-based multi-tenancy.

Challenges with This Approach

- Shared Underlying Infrastructure: Tenants still share the same nodes, network, and storage.

- Limited Isolation Guarantees: Network and security policies must still be enforced at the namespace level.

- Potential Misalignment: Policies applied to the host namespace may conflict with what tenants expect inside their virtual cluster.

- No Node or Resource Pooling Separation: All workloads still compete for the same physical compute.

Namespace Syncing offers a strong improvement over traditional namespaces, but it still inherits some of the same infrastructure-level limitations.

Best-Fit Use Cases

- Teams already using namespace-based tenancy that want better isolation

- Organizations migrating to vCluster incrementally

- Workloads that don’t need node isolation but benefit from API or RBAC separation

- Internal platforms that want to give tenants full CRD/custom resource access

Isolation Characteristics

Compatibility

- ✅ Works with existing namespaces in any Kubernetes cluster

- ✅ Supports all vCluster control plane features

- ✅ No need to migrate workloads or change deployment models

- 🔶 Still requires network and quota policies for full tenant separation

- ❌ Not suitable for strict security or compliance environments without additional controls

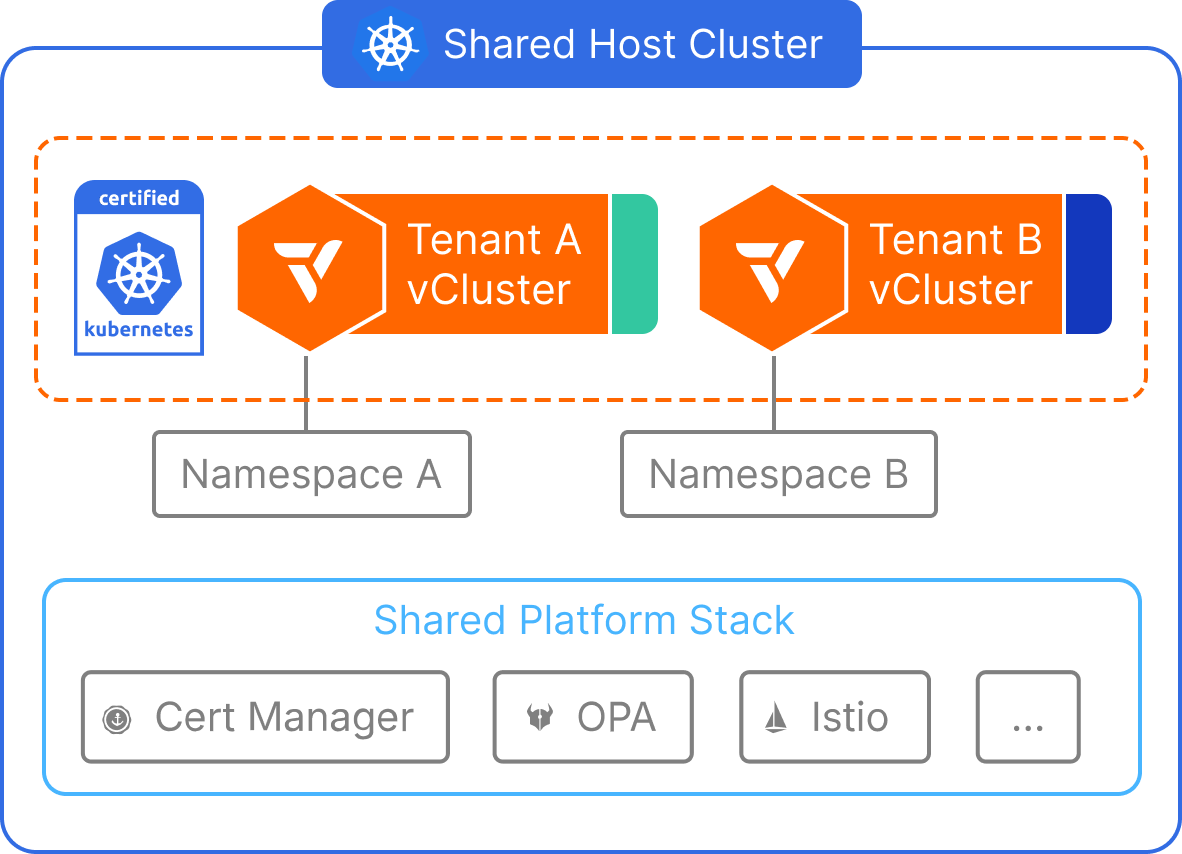

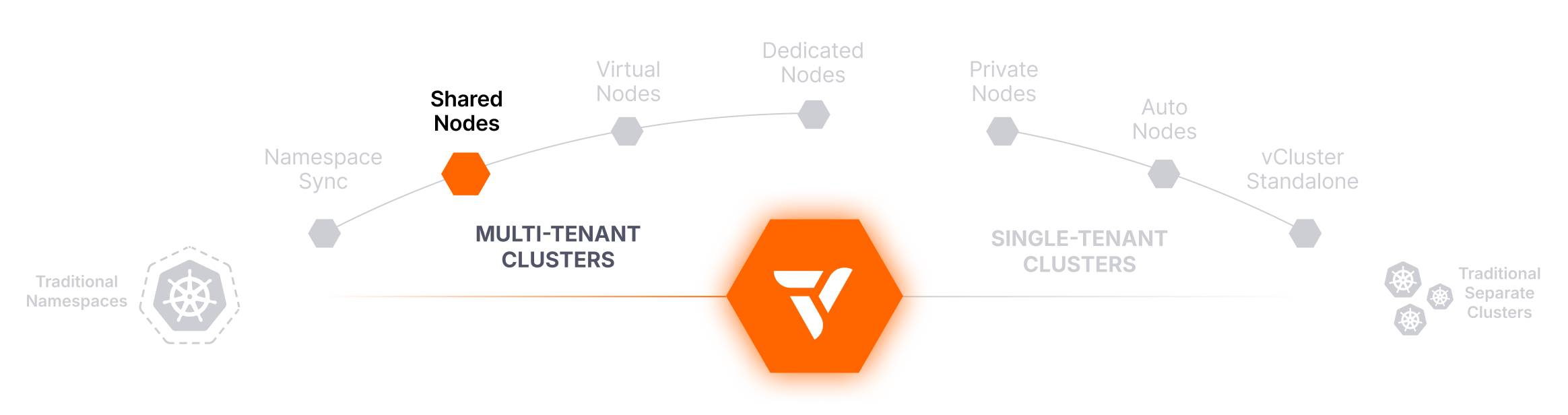

Shared Nodes

Run Virtual Clusters on Shared Nodes — Maximize Density, Minimize Waste

Best for efficient compute usage across multiple virtual clusters, especially in dev/test environments.

The Shared Nodes tenancy model allows multiple virtual clusters to run workloads on the same physical Kubernetes nodes. This configuration is ideal for scenarios where maximizing resource utilization is a top priority—especially for internal developer environments, CI/CD pipelines, and cost-sensitive use cases.

Each virtual cluster has its own isolated control plane, API server, and CRDs, but workloads are scheduled without node-level isolation. This setup helps platform teams deliver the benefits of vCluster (like per-tenant customization and faster provisioning) while minimizing infrastructure costs by sharing underlying compute across all tenants.

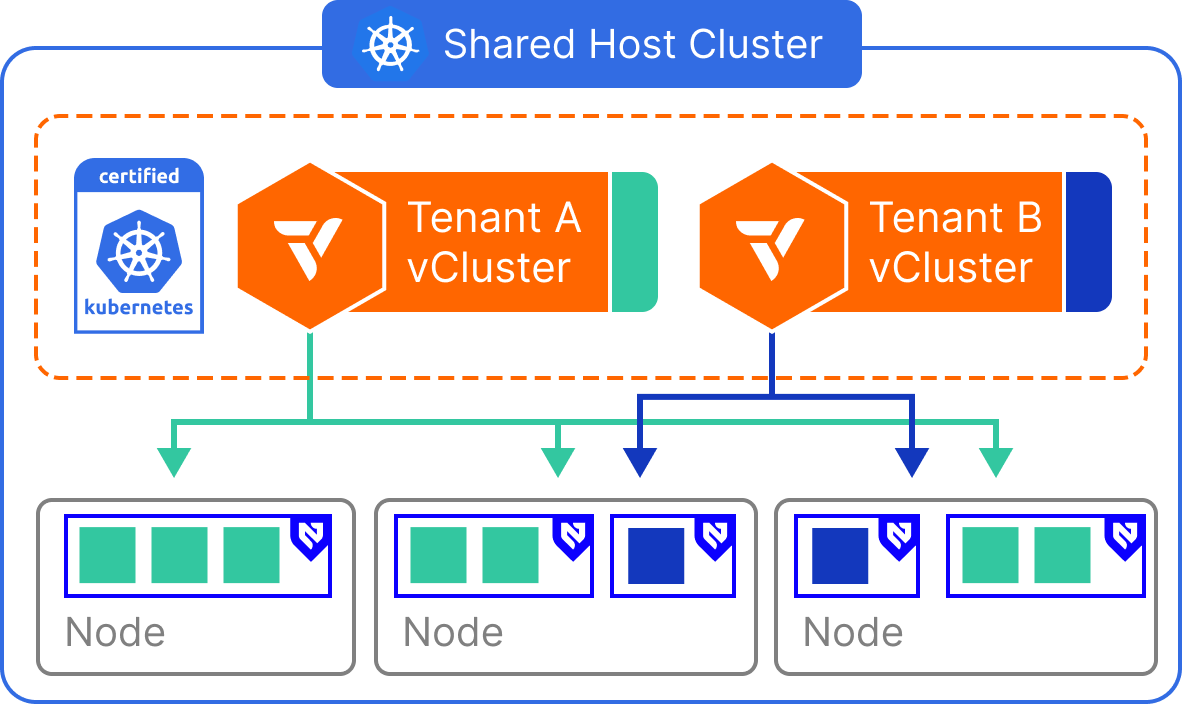

How It Works

All virtual clusters run in a single Kubernetes host cluster and schedule pods onto the same shared node pool. The vCluster control plane enforces separation at the API, RBAC, and CRD levels, but does not restrict pod scheduling unless additional mechanisms (e.g., taints, affinities) are applied.

Tenants interact with their own virtual clusters as if they are separate environments, but their workloads run side-by-side with those from other vClusters at the node level. Shared infrastructure components like the container runtime, CNI, and CSI drivers are used across all tenants.
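The taints mentioned above are the standard Kubernetes way to keep pods off nodes they have not been cleared for. The sketch below is a simplified model of the taint and toleration matching rules, written for illustration only; the `tenant` key and team names are hypothetical, and real scheduling involves many more filters.

```python
def tolerates(toleration: dict, taint: dict) -> bool:
    """Return True if a single toleration matches a single taint."""
    # An empty effect on the toleration matches every effect.
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    operator = toleration.get("operator", "Equal")
    key = toleration.get("key")
    if operator == "Exists":
        # An empty key with Exists matches every taint key.
        return not key or key == taint["key"]
    return key == taint["key"] and toleration.get("value", "") == taint.get("value", "")


def schedulable(pod_tolerations: list, node_taints: list) -> bool:
    """A pod fits only if every NoSchedule/NoExecute taint is tolerated."""
    blocking = [t for t in node_taints if t["effect"] in ("NoSchedule", "NoExecute")]
    return all(any(tolerates(tol, t) for tol in pod_tolerations) for t in blocking)


if __name__ == "__main__":
    taint = {"key": "tenant", "value": "team-a", "effect": "NoSchedule"}
    team_a = [{"key": "tenant", "operator": "Equal",
               "value": "team-a", "effect": "NoSchedule"}]
    print(schedulable(team_a, [taint]))  # team-a pods tolerate the taint
    print(schedulable([], [taint]))      # pods without the toleration do not
```

Taints only repel pods that lack a matching toleration. They do not force tolerating pods onto the tainted nodes, so they are usually paired with a node selector or affinity rule.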

Why It’s Valuable

- High Efficiency: Minimizes unused capacity and improves node utilization.

- Low Cost: No need to overprovision for every tenant—compute is pooled and shared.

- Fast Provisioning: Virtual clusters can be spun up instantly without any node-specific configuration.

- Supports Burst Workloads: Perfect for CI, test environments, or ephemeral developer clusters.

- Full vCluster Benefits: Tenants still receive isolated control planes, CRD freedom, and scoped access.

Challenges with This Approach

- No Node Isolation: Workloads from different tenants can impact each other’s performance (noisy neighbors). The Virtual Nodes model can help if stronger isolation is required.

- Security Considerations: Tenants share the same CNI, CSI, and host OS—security policies must be enforced manually.

- Resource Quotas Are Optional: Without tight quota management, one tenant can consume excess compute.

- Not Ideal for Regulated Workloads: Lacks the separation required for compliance-heavy environments.

This model is best when speed and density matter more than strict isolation guarantees.

Best-Fit Use Cases

- Internal developer environments with high churn

- CI pipelines or test jobs that run in parallel

- Shared staging environments with limited budget

- SaaS platforms offering low-cost tenant tiers

Isolation Characteristics

Compatibility

- ✅ Works with Sleep Mode, Auto Wakeup, and Auto Delete

- ✅ Supported in all Kubernetes clusters

- ✅ Full CRD and API isolation per virtual cluster

- 🔶 Requires quota enforcement and network policies

- ❌ Not intended for workloads requiring compute or kernel-level separation

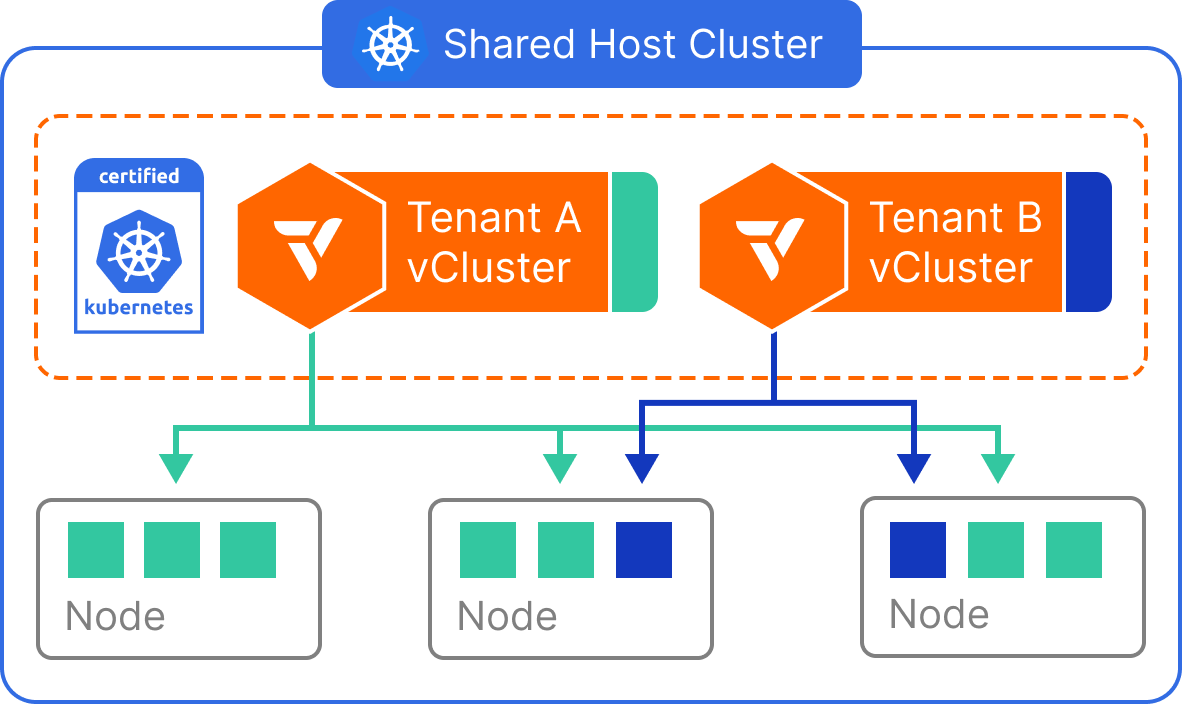

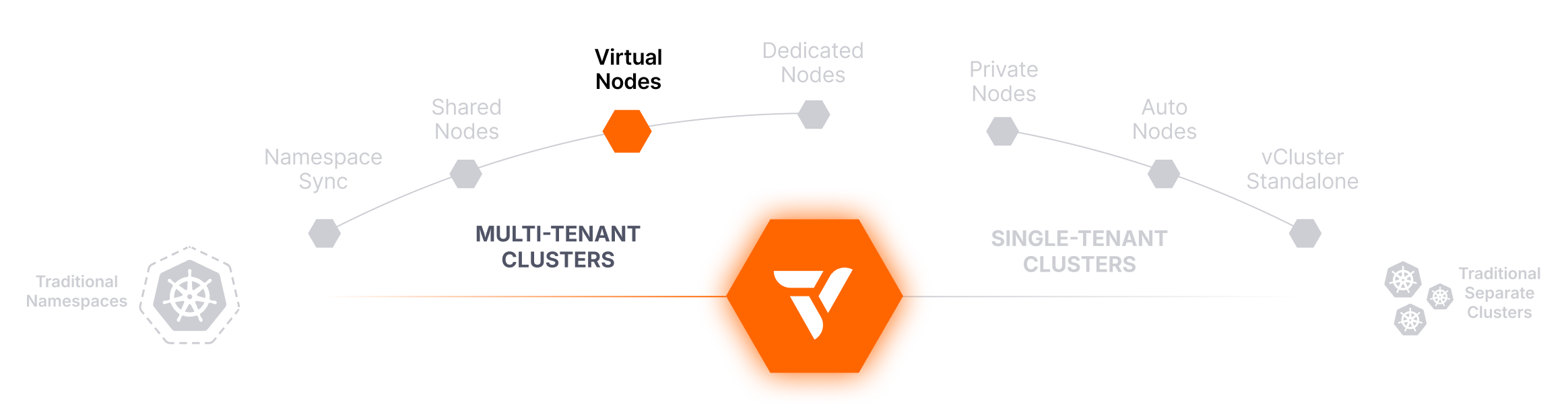

Virtual Nodes

Enforce Tenant Boundaries at the Node Level — Without Dedicating Physical Infrastructure

Virtualizes node boundaries for enhanced security and separation inside a shared cluster.

Virtual Nodes provide an effective way to isolate tenant workloads without allocating dedicated physical nodes per tenant. This model leverages virtualization at the node level—through vNode—to create strong scheduling boundaries while continuing to share the underlying infrastructure.

Each virtual cluster receives its own control plane and interacts with a virtualized view of the node environment. Workloads are scheduled onto tenant-scoped virtual nodes and then run as regular pods on the shared cluster. This allows teams to achieve node-level isolation semantics, including taints and tolerations, without managing separate node pools.

How It Works

Virtual Nodes are implemented within vCluster by inserting a translation layer between the virtual control plane and the physical cluster. From the perspective of a tenant, the vCluster presents one or more virtual nodes, each representing a safe, scoped execution environment.

Internally, workloads from these virtual nodes are scheduled as regular pods on shared physical nodes, but the tenant does not see or interact with the real underlying node environment. This abstraction enables stronger isolation, enforces placement boundaries, and prevents tenants from seeing or interacting with each other’s workloads—even though compute is technically shared.

Why It’s Valuable

- Node Boundary Abstraction: Tenants can’t see or schedule outside of their virtual node scope.

- Security & Compliance Friendly: Helps enforce workload boundaries without physical isolation.

- No Cluster Duplication Needed: Offers isolation benefits without spinning up separate node pools.

- Supports Existing Kubernetes Patterns: Tenants can use scheduling features like affinities or anti-affinities.

- Improved Tenant Safety: Reduces exposure to noisy neighbors and unauthorized scheduling.

Challenges with This Approach

- Still Uses Shared Compute: Physical resources are shared; this is not a hardware-level boundary.

- Added Abstraction Layer: Virtual node logic adds complexity to scheduling and debugging.

- Resource Overlap Is Still Possible: Without proper quota controls, tenants may compete for compute.

- Needs Proper Policy Enforcement: Virtual isolation doesn’t prevent abuse unless reinforced with quota and security policies.

This tenancy mode is ideal when you need workload separation but want to avoid operational and cost overhead from dedicated infrastructure.

Best-Fit Use Cases

- Multi-tenant platforms that want lightweight but effective isolation

- Internal Kubernetes platforms offering self-service environments

- Environments with security-conscious tenants but shared compute models

- Mid-sized teams that need boundaries between dev, staging, and test

Isolation Characteristics

Compatibility

- ✅ Fully compatible with vCluster

- ✅ Supports Sleep Mode, Auto Wakeup, and custom CRDs

- ✅ Allows scheduling constructs like affinities and taints

- 🔶 Requires quota enforcement to prevent noisy neighbor effects

- ❌ Not sufficient for workloads requiring physical node or kernel separation

Dedicated Nodes

Assign Virtual Clusters to Specific Node Pools — Dedicated Compute Without Cluster Sprawl

Provides node-level compute separation by targeting labeled node groups within a shared cluster.

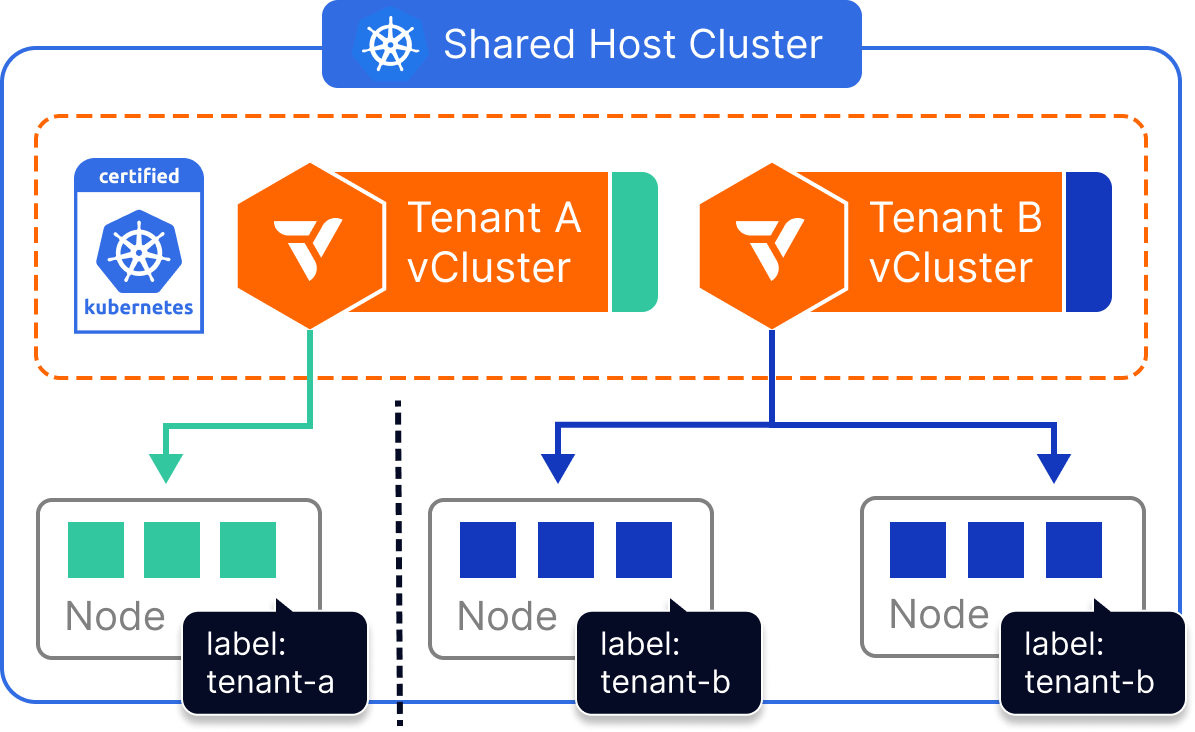

Dedicated Nodes allow platform teams to give each virtual cluster exclusive access to a set of physical nodes—without having to provision entirely separate clusters. By combining vCluster’s multi-tenant architecture with Kubernetes node selectors, workloads from each virtual cluster can be scoped to a specific group of labeled nodes, ensuring compute separation across tenants.

This approach enables strong operational boundaries and predictable performance, while maintaining all the benefits of shared infrastructure. It’s especially effective for teams who want dedicated compute for certain tenants, environments, or workloads—without duplicating every part of the platform stack.

How It Works

Each vCluster is configured with a Kubernetes nodeSelector (or affinity rules) that ensures all tenant workloads are scheduled only to nodes with specific labels. For example, a virtual cluster assigned to nodegroup=tenant-a will only run pods on nodes matching that label.

While compute is scoped to these dedicated nodes, all other components—like the CNI, CSI, and underlying Kubernetes host cluster—remain shared. The vCluster itself maintains full API isolation, separate CRDs, tenant-specific RBAC, and control plane security.
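The nodeSelector rule itself is simple: a pod is eligible for a node only if the node’s labels contain every key/value pair in the selector. The sketch below models that rule and a hypothetical helper that forces a tenant’s selector onto a pod; the helper names and node names are assumptions for illustration, and the `nodegroup=tenant-a` label comes from the example above.

```python
import copy


def with_node_selector(pod: dict, selector: dict) -> dict:
    """Return a copy of the pod with the tenant selector merged in."""
    patched = copy.deepcopy(pod)
    spec = patched.setdefault("spec", {})
    # Tenant-enforced labels win over anything the pod author set.
    spec["nodeSelector"] = {**spec.get("nodeSelector", {}), **selector}
    return patched


def eligible_nodes(pod: dict, nodes: dict) -> list:
    """Names of nodes whose labels contain every pair in the pod's nodeSelector."""
    selector = pod.get("spec", {}).get("nodeSelector", {})
    return sorted(
        name for name, labels in nodes.items()
        if all(labels.get(key) == value for key, value in selector.items())
    )


if __name__ == "__main__":
    nodes = {
        "node-1": {"nodegroup": "tenant-a"},
        "node-2": {"nodegroup": "tenant-b"},
        "node-3": {"nodegroup": "tenant-a", "gpu": "true"},
    }
    pod = {"metadata": {"name": "web"}, "spec": {}}
    scoped = with_node_selector(pod, {"nodegroup": "tenant-a"})
    print(eligible_nodes(scoped, nodes))
```

Because the selector is merged in last, a pod that asks for another tenant’s node group is overridden rather than trusted, which is the kind of enforcement the challenges below call for.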

Why It’s Valuable

- Precision Scheduling: Route tenant workloads to specific node pools based on cost, workload type, or team ownership.

- Cost-Efficient Isolation: Avoids the overhead of full cluster duplication while maintaining compute separation.

- Flexible Rebalancing: Node assignments can be changed dynamically by relabeling nodes or updating selector rules.

- Compatible with Autoscaling: Use dynamic node pools that scale up/down based on vCluster workload demand.

- Retains Full vCluster Benefits: Control plane isolation, tenant-level CRDs, and access control still apply.

Challenges with This Approach

- Shared Platform Layer: Tenants still share the same CNI, CSI, and Kubernetes host infrastructure.

- Label Enforcement Required: Security depends on ensuring workloads stay within their assigned nodes.

- Manual Oversight Needed: Mislabeling or skipped selectors could result in accidental cross-tenant scheduling.

- Policy Backing Recommended: Use admission controls, automation, or templates to avoid human error.

Dedicated Nodes strike a balance between hard separation and infrastructure efficiency, but require thoughtful governance and policy design.

Best-Fit Use Cases

- Teams with predictable compute needs and tenant-specific node pools

- Production workloads needing dedicated capacity without separate clusters

- ML, GPU, or high-performance compute workloads

- Workloads where performance isolation matters but full platform duplication is overkill

Isolation Characteristics

Compatibility

- ✅ Works with Sleep Mode, Auto Wakeup, and Auto Delete

- ✅ Configurable via vcluster.yaml or Helm

- ✅ Supports taints, tolerations, affinities, and autoscaling

- 🔶 Requires label enforcement via policies or automation

- ❌ Not intended for full platform isolation (see Private Nodes instead)

Private Nodes

Provision Fully Isolated Clusters for Tenants — Separate CNI, CSI, and Compute

Delivers maximum tenant isolation with dedicated clusters, ideal for regulated or production-critical environments.

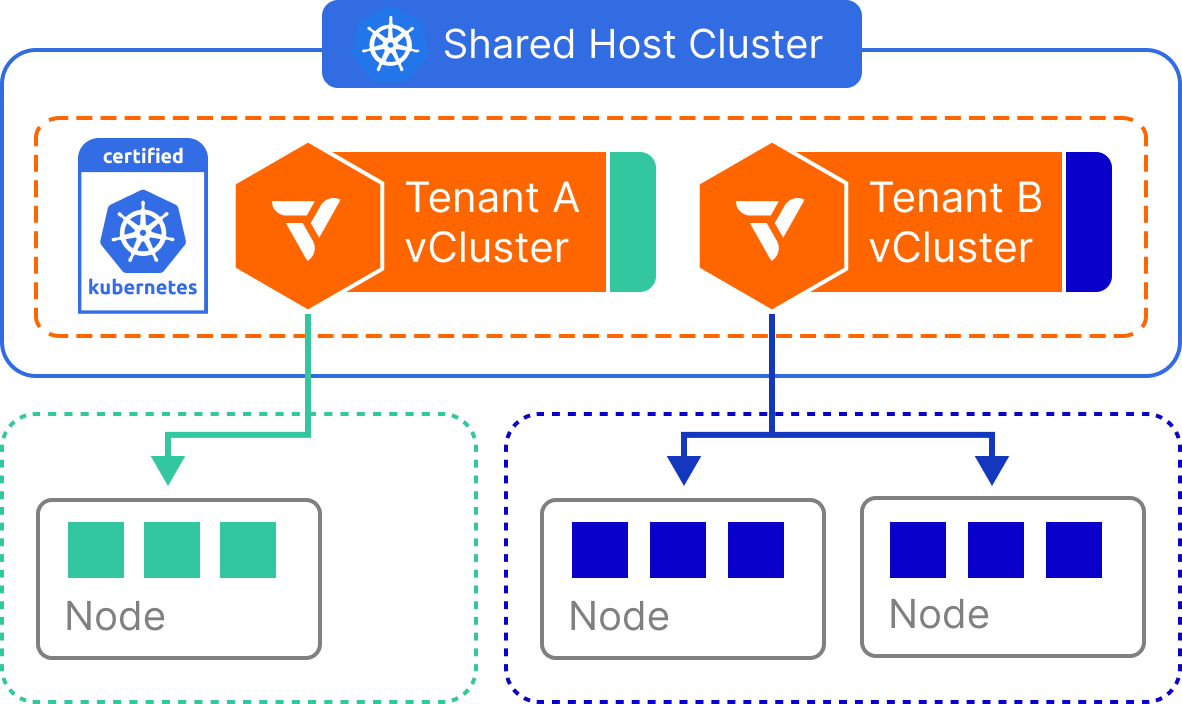

Private Nodes provide the strongest isolation among vCluster tenancy models. In this setup, each virtual cluster runs inside its own dedicated Kubernetes host cluster—backed by physically separate nodes, a separate control plane, and separate infrastructure components like CNI and CSI drivers. From the tenant’s perspective, the environment behaves like a single-tenant Kubernetes cluster, with all platform services fully isolated.

This approach ensures that no tenant shares compute, networking, or storage with others. It’s best suited for highly sensitive workloads that require strict compliance, regulatory boundaries, or strong guarantees around performance, network isolation, and tenant autonomy.

How It Works

Each vCluster is deployed into its own Kubernetes host cluster, provisioned with a dedicated set of physical nodes. The CNI, CSI, kube-proxy, and all other Kubernetes components are fully isolated per tenant.

Because vCluster runs on top of this separate host cluster, it inherits the benefits of virtual cluster abstraction (faster startup, CRD freedom, sleep mode, etc.), but adds an additional hard isolation boundary beneath it. Tenants cannot interfere with one another’s environments at any layer—from API server to node kernel.

Why It’s Valuable

- Maximum Security: Full isolation across every infrastructure layer—compute, network, and control plane.

- Compliance Ready: Meets requirements for regulatory environments like finance, healthcare, or government.

- Customizable Per Tenant: Each tenant can run their own platform stack or runtime configurations.

- No Noisy Neighbors: Guaranteed performance isolation at the node and kernel level.

- Platform Hardening: Enables per-tenant upgrades, maintenance windows, or tuning.

Challenges with This Approach

- Operational Overhead: Requires provisioning and managing multiple full clusters.

- Higher Cost: Dedicated infrastructure increases baseline compute and storage spend.

- Complexity at Scale: As tenant count grows, cluster sprawl and platform duplication can become burdensome.

- Slower Startup Time: New environments depend on full cluster provisioning or automation readiness.

This model is ideal when tenant safety and independence are more important than resource efficiency.

Best-Fit Use Cases

- Highly regulated workloads (e.g., financial services, healthcare, public sector)

- Multi-tenant SaaS offerings with strict security or compliance contracts

- Internal platforms with high-value or mission-critical workloads

- Customers who need per-tenant customization, SLAs, or upgrades

Isolation Characteristics

Compatibility

- ✅ Works with hosted or standalone control planes

- ✅ Supports full vCluster feature set

- ✅ CRDs, operators, and workloads are fully tenant-scoped

- 🔶 Requires automation to manage cost and sprawl

- ❌ Not ideal for bursty or ephemeral workloads due to infra cost

Auto Nodes

Dynamic Node Autoscaling, Anywhere You Run Kubernetes

Unlock cloud-style elasticity across virtual clusters in public cloud, private cloud, and bare metal with embedded Karpenter.

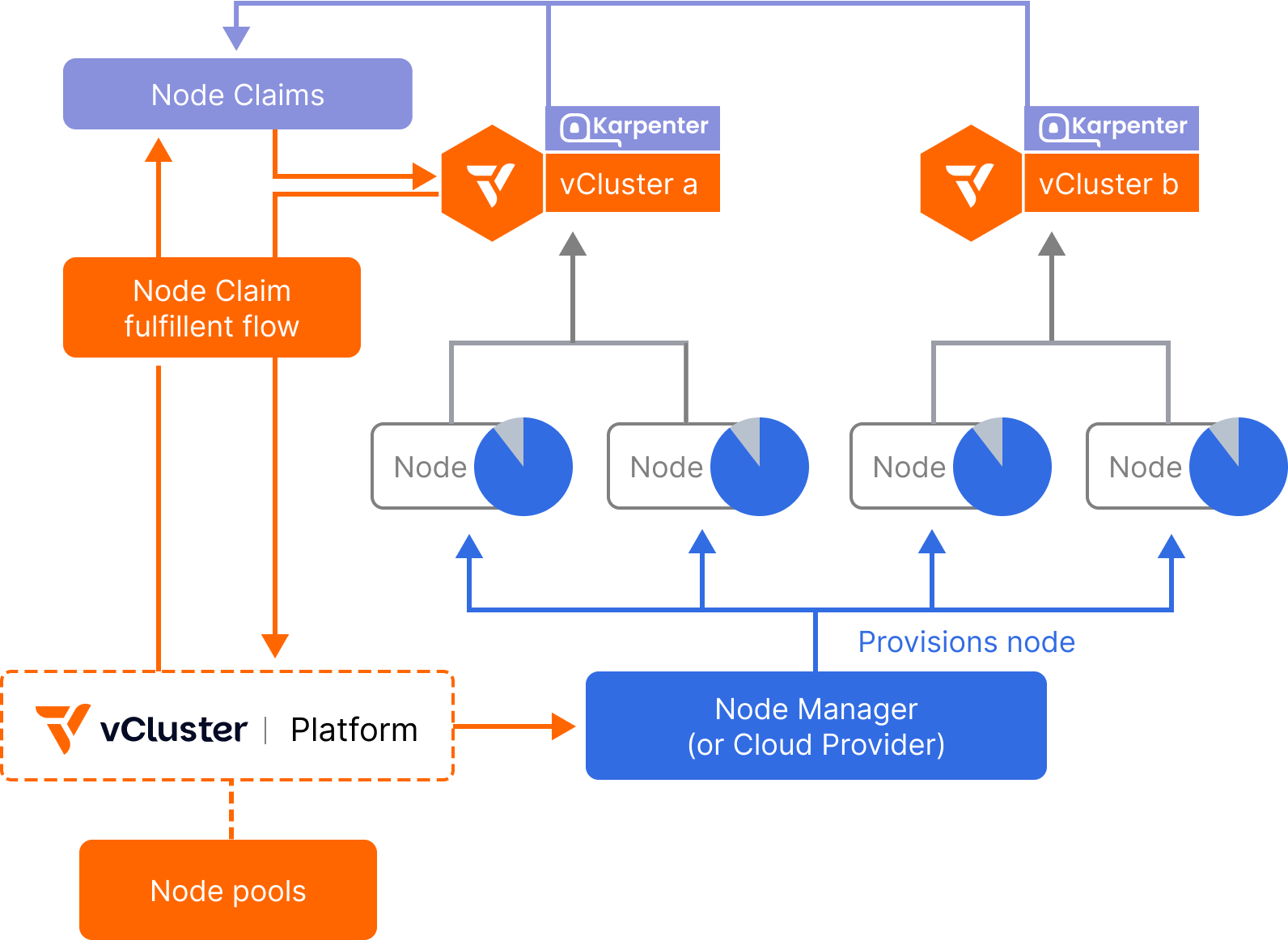

Auto Nodes integrates a managed instance of Karpenter directly inside each virtual cluster, transforming it into a fully isolated autoscaling unit. With dynamic provisioning and deprovisioning of compute across hybrid, multi-cloud, and even bare metal environments, platform teams can now scale workloads elastically, without over-provisioning or vendor lock-in.

Each virtual cluster can trigger the creation of NodeClaims, which the platform fulfills by dynamically scaling up the appropriate node pool, whether that means launching traditional cloud VMs, bare metal PXE-booted nodes, or virtualized environments like vNode or KubeVirt. Underutilized nodes are automatically drained and returned to the shared pool, improving efficiency and reducing cloud spend.

How It Works

The vCluster Platform runs an embedded, fully configurable instance of Karpenter that listens to scheduling pressure and provisioning needs from all connected virtual clusters. When a virtual cluster’s scheduler detects the need for more compute (e.g., due to a pending pod), it submits a NodeClaim to the platform.

The platform’s Node Manager fulfills these claims by selecting from available Node Pools, each defined by a set of instance types, node classes, or backing infrastructure (e.g., GPU, ARM, x86, bare metal). Nodes are joined dynamically to the host cluster and assigned to the requesting vCluster.

Once the workload completes or scales down, unused nodes can be automatically removed, rebalanced, or reused by other virtual clusters.
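The claim-and-fulfill flow above can be pictured with a toy model. The sketch below picks the cheapest node type in a pool that satisfies a claim’s CPU, memory, and GPU needs. The class fields, pool contents, and hourly prices are all made-up placeholders, and the selection rule is a deliberate simplification rather than Karpenter’s or the platform’s actual algorithm.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NodeType:
    name: str
    cpu: float          # cores
    memory_gib: float
    gpus: int
    hourly_cost: float  # placeholder price, not real pricing data


@dataclass(frozen=True)
class NodeClaim:
    vcluster: str       # which virtual cluster is asking
    cpu: float
    memory_gib: float
    gpus: int = 0


def fulfill(claim: NodeClaim, pool: list) -> Optional[NodeType]:
    """Pick the cheapest node type that satisfies the claim, if any."""
    fitting = [
        node for node in pool
        if node.cpu >= claim.cpu
        and node.memory_gib >= claim.memory_gib
        and node.gpus >= claim.gpus
    ]
    return min(fitting, key=lambda node: node.hourly_cost, default=None)


if __name__ == "__main__":
    pool = [
        NodeType("small-cpu", 4, 16, 0, 0.20),
        NodeType("large-cpu", 16, 64, 0, 0.80),
        NodeType("gpu", 8, 32, 1, 2.50),
    ]
    print(fulfill(NodeClaim("team-a", cpu=2, memory_gib=8), pool))
    print(fulfill(NodeClaim("team-b", cpu=6, memory_gib=8, gpus=1), pool))
```

A claim that no node type can satisfy returns `None`, which in a real system would surface as a pod that stays pending until the pool definition changes.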

Why It’s Valuable

- Avoid Cloud Lock-In: Move workloads across providers or data centers without changing your workload or cluster structure.

- Elastic Compute for Isolated Workloads: No more trade-offs between hard isolation and flexible scaling.

- Infrastructure-Agnostic: Supports autoscaling across cloud, private data center, GPU clusters, or hybrid setups.

- Better Cost Efficiency: Auto Nodes reduces idle capacity by automatically deprovisioning underutilized nodes.

Challenges with This Approach

- Shared Pools Add Complexity: In multi-tenant setups, you must balance fairness, priority, and scale limits.

- Requires Intelligent Node Pool Design: NodePools must be designed for tenant needs and workloads.

- Observability is Critical: Teams need visibility into node claims, scaling events, and pool usage.

- Initial Setup Involves Tuning: Configuration of Karpenter, limits, taints, and budgets requires careful planning.

Despite these challenges, Auto Nodes pays off in scalability, efficiency, and automation.

Best-Fit Use Cases

- Large-scale environments with multiple bursty virtual clusters

- GPU workload orchestration and intelligent bin-packing

- CI/CD workloads that fluctuate heavily

- Bare metal node management in on-prem or hybrid environments

- Environments requiring cost-effective scaling of production and dev workloads

Isolation Characteristics

Compatibility

- ✅ Works with all vCluster features: Sleep Mode, Auto Wakeup, vcluster.yaml, etc.

- ✅ Supports GPU/CPU constraints, NodeClasses, and NodePools

- ✅ Integrates with OpenTofu/Terraform, BCM, KubeVirt, and custom providers

- 🔶 Requires provisioning access to target infrastructure

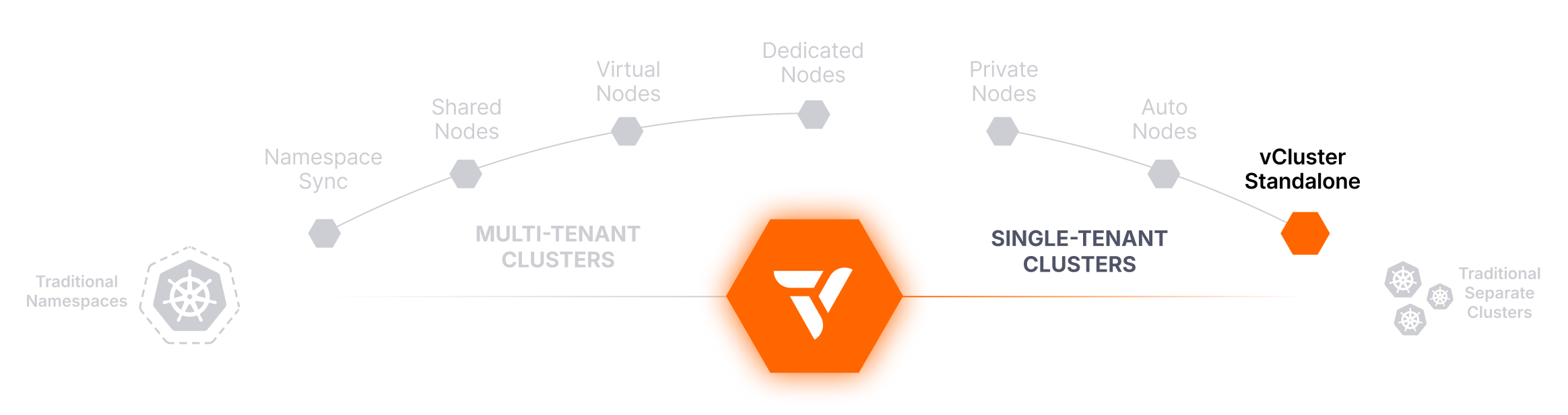

vCluster Standalone

Run Virtual Clusters Without a Host — vCluster Standalone for Maximum Portability

Spin up lightweight virtual clusters anywhere, without relying on a Kubernetes host cluster.

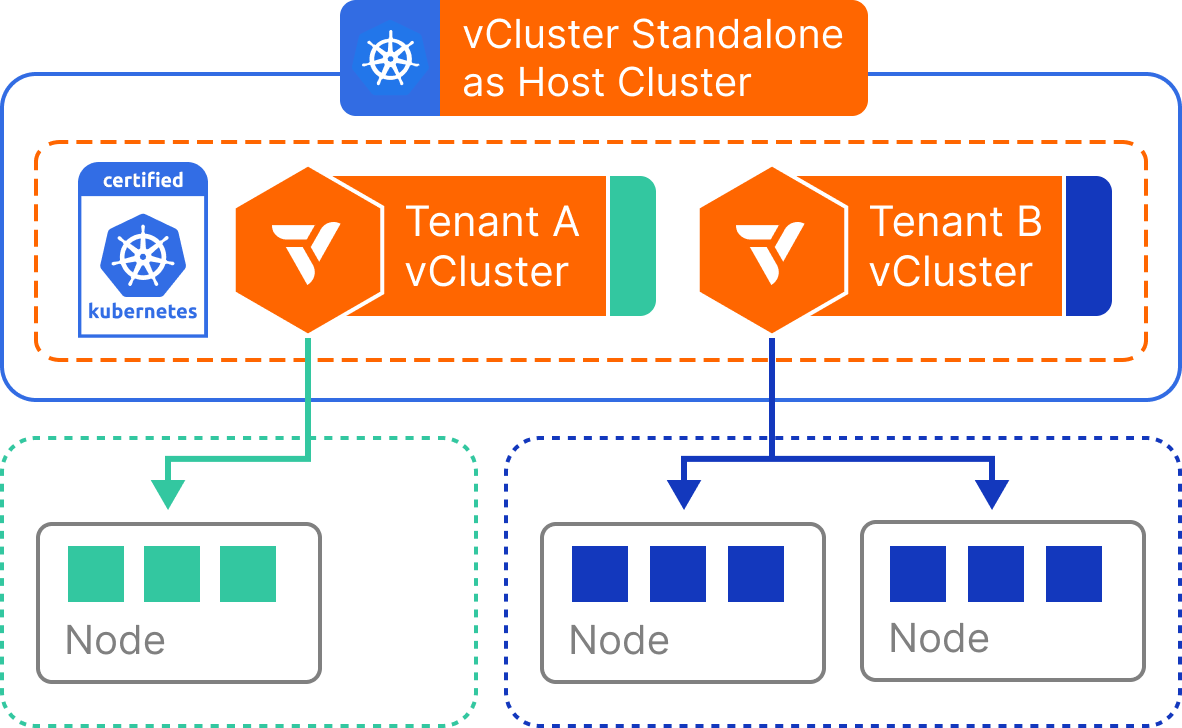

Standalone vClusters eliminate the need for a pre-existing Kubernetes host cluster. Instead, the virtual cluster runs as a fully self-contained process—typically inside a single container or VM—capable of bootstrapping its own control plane and simulating the Kubernetes environment.

This model is ideal for use cases where speed, portability, or independence is paramount. Whether you’re running workloads in CI pipelines, demos, local development setups, or air-gapped environments, vCluster Standalone offers a fast, lightweight way to provision isolated Kubernetes environments without relying on cluster-level infrastructure or orchestration.

How It Works

A Standalone vCluster launches its own lightweight control plane components (like a virtual API server and virtual scheduler) and backs workloads with a local Kubernetes-compatible runtime. Since no Kubernetes host cluster is required, virtual clusters can run independently in a container, virtual machine, or even on a developer laptop.

Despite being self-contained, a Standalone vCluster behaves just like a regular Kubernetes control plane: it supports custom resources, RBAC, workload scheduling, and even integrations with CI/CD tools. Workloads are managed entirely within the virtual cluster and do not require a physical cluster node pool to function.

Why It’s Valuable

- No Host Cluster Required: Reduces infrastructure dependencies—just run it anywhere.

- Extreme Portability: Ideal for isolated or resource-constrained environments.

- Fast Startup Time: Virtual clusters can be launched in seconds, perfect for ephemeral use.

- Great for Demos and CI: Simplifies Kubernetes bootstrapping in sandbox or preview workflows.

- Developer-Friendly: Makes Kubernetes accessible without needing cluster credentials or complex setup.

Challenges with This Approach

- No Real Workload Scheduling: Lacks backing nodes unless integrated with a runtime.

- Limited Multi-Tenancy Scalability: Designed for small, scoped environments, not shared production platforms.

- Environment Gaps: Not all Kubernetes features are available in every runtime or container setup.

- Not for Long-Running Workloads: Best suited for short-lived use, like tests or previews.

vCluster Standalone is powerful for portability, but isn’t a replacement for full-scale multi-tenant infrastructure.

Best-Fit Use Cases

- Local development or sandbox environments

- CI/CD pipelines needing Kubernetes clusters-on-demand

- Educational workshops or Kubernetes onboarding

- Isolated systems (e.g., air-gapped or offline environments)

- Quick demos, previews, or GitOps bootstrapping

Isolation Characteristics

Compatibility

- ✅ Works without a Kubernetes host cluster

- ✅ Fully supports CRDs, RBAC, and virtual cluster API behavior

- ✅ Integrates into CI tools, containers, and developer machines

- 🔶 Limited workload execution unless connected to a backing runtime

- ❌ Not designed for shared, long-lived, or production-scale usage

Traditional Separate Clusters

Provision a Cluster Per Tenant — Strong Isolation, Heavy Overhead

The traditional method for tenant separation. Offers full control and security at the cost of complexity, duplication, and scalability challenges.

Provisioning a fully separate Kubernetes cluster for each tenant is the best-understood and most straightforward form of multi-tenancy. Every tenant gets their own cluster, whether it runs on physical or virtual infrastructure, including a dedicated control plane, node pool, and platform services like CNI, CSI, monitoring, and logging.

While this model offers the strongest isolation guarantees, it comes with substantial operational and financial cost. It often leads to cluster sprawl, duplicated effort across environments, and growing platform complexity as the number of tenants increases.

This approach remains common in regulated industries and traditional enterprise settings, but it is increasingly being replaced by more scalable, efficient alternatives, like vCluster.

How It Works

Each tenant receives its own Kubernetes cluster, either provisioned manually or via infrastructure-as-code tools (e.g., Terraform, Cluster API, cloud-native APIs). All cluster resources are isolated by design: tenants cannot interact with each other, and workloads are scheduled to entirely separate compute environments.

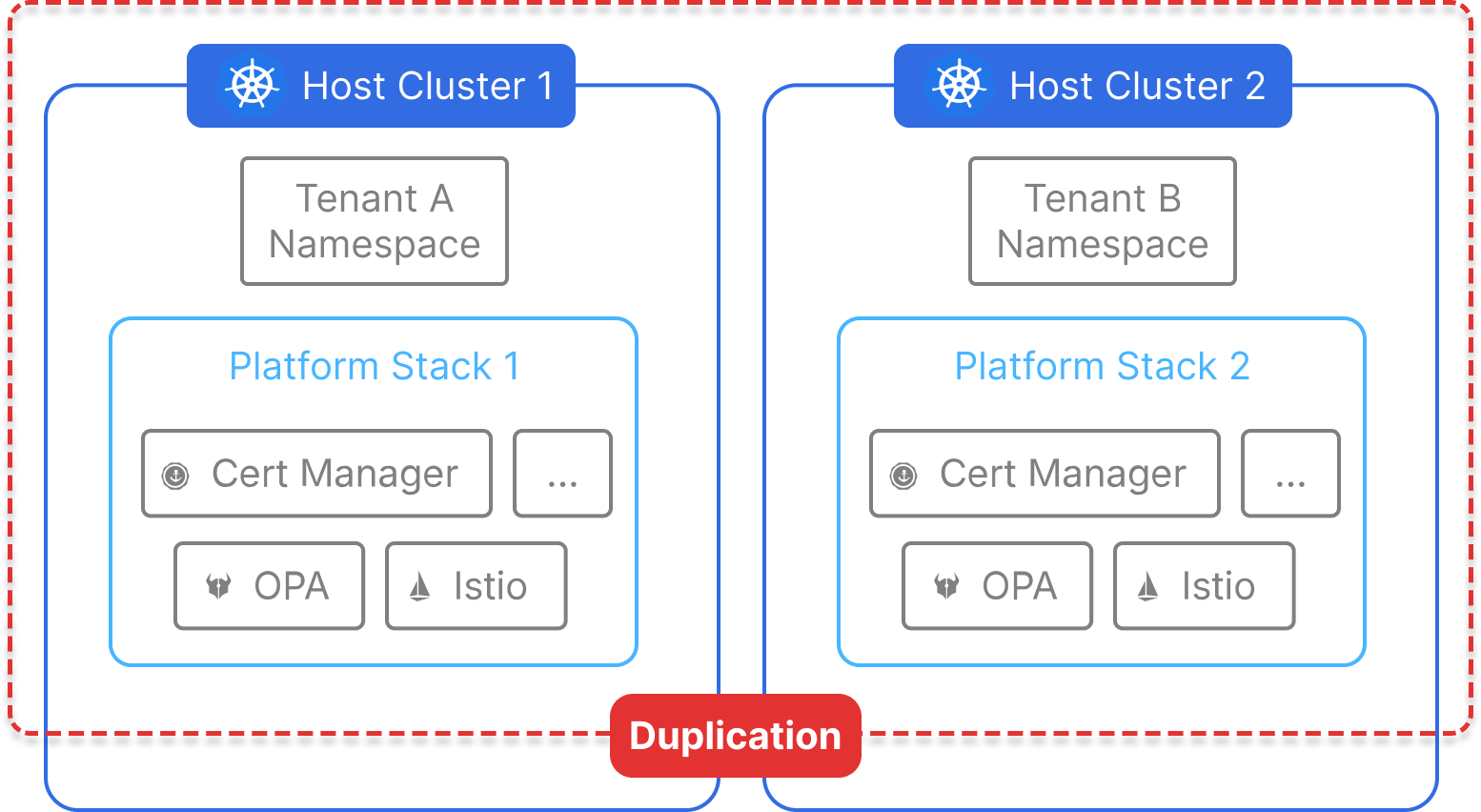

This isolation extends beyond compute—each tenant also gets independent CRDs, webhooks, admission controllers, and control plane components. But because every cluster runs the full stack of infrastructure services, the cost and complexity increase linearly with the number of tenants.

Why It’s Valuable

- Complete Isolation: No resource sharing means no cross-tenant impact.

- Compliance-Ready: Meets strict regulatory, audit, or contractual requirements.

- Tenant Autonomy: Each tenant can customize CRDs, networking, upgrade schedules, and tooling.

- Minimal Policy Enforcement Needed: Isolation is inherent, reducing the need for complex rules.

- Dedicated SLAs: Easy to implement per-tenant service level agreements.

Challenges with This Approach

- High Operational Overhead: Managing dozens or hundreds of clusters increases burden on platform teams.

- Cluster Sprawl: Platform components must be duplicated across every environment—CNI, CSI, monitoring, ingress, policy, etc.

- Poor Resource Utilization: Underused compute is common due to rigid resource boundaries.

- Slow Provisioning: Spinning up new clusters is slower and more resource-intensive than launching a virtual cluster.

- Difficult to Scale: As tenant count grows, automation, cost, and complexity become major concerns.

While this model works for high-security workloads, most teams can achieve similar benefits with less cost and complexity using vCluster.

Best-Fit Use Cases

- Regulated workloads requiring strict physical or organizational separation

- Enterprises with highly siloed teams or business units

- Multi-tenant SaaS vendors with per-customer compliance obligations

- Environments with per-tenant billing, upgrades, or platform customization

Isolation Characteristics

Compatibility

- ✅ Works with all Kubernetes-native tools and architectures

- ✅ Easy to enforce strict per-tenant customization

- ✅ Suitable for regulated environments and dedicated SLAs

- ❌ Inefficient at scale without extensive automation

- ❌ Duplicates platform components across clusters

- ❌ Slower to provision and more costly to maintain

Closing

There’s no one-size-fits-all model for Kubernetes multi-tenancy—but with vCluster, you don’t have to choose just one. You can combine tenancy models to suit different environments, teams, or workloads, and evolve your architecture over time.

Start with Namespace Syncing or Shared Nodes. Grow into Dedicated or Private Nodes. Leverage Auto Nodes to keep infrastructure efficient at every step.

And when you’re ready to abstract away the Kubernetes learning curve entirely, vCluster gives you the tools to create a seamless internal platform with fast, secure, tenant-aware virtual clusters at its core.

Ready to see it in action? Try vCluster today.

Full Comparison Table
